HypCBC: Domain-Invariant Hyperbolic Cross-Branch Consistency

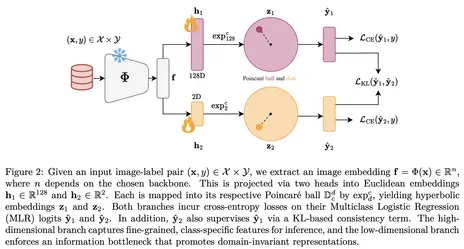

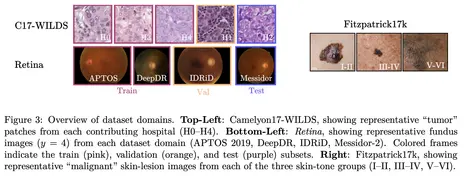

We propose HypCBC, a method that projects embeddings from a frozen foundation model into hyperbolic space and trains the projected representations with a two-branch strategy. The key idea is to combine a high-dimensional branch that captures fine-grained information with a low-dimensional branch that acts as an information bottleneck and encourages domain-invariant representations. A cross-branch consistency objective then transfers robust, domain-agnostic information between the two branches. Across eleven medical imaging datasets and three Vision Transformer backbones, hyperbolic embeddings consistently outperform Euclidean ones, and HypCBC further improves domain generalization on challenging benchmarks in dermatology, histopathology, and retinal imaging. The method is lightweight and backbone-agnostic, and it is particularly well suited to robust medical AI under distribution shift.
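The two-branch idea can be made concrete with a small NumPy sketch. The abstract does not state which hyperbolic model, branch dimensions, or consistency loss HypCBC uses, so everything below is an illustrative assumption rather than the authors' implementation: the Poincaré ball with an exponential map at the origin, linear projection heads of width 128 and 2, and a consistency term that penalizes differences between the two branches' pairwise geodesic distance matrices. The names `expmap0`, `poincare_dist`, `two_branch_embed`, and `consistency_loss` are hypothetical.

```python
import numpy as np

def expmap0(v, c=1.0, eps=1e-9):
    """Exponential map at the origin of the Poincare ball (curvature -c)."""
    norm = np.maximum(np.linalg.norm(v, axis=-1, keepdims=True), eps)
    return np.tanh(np.sqrt(c) * norm) * v / (np.sqrt(c) * norm)

def poincare_dist(x, y, c=1.0, eps=1e-9):
    """Pairwise geodesic distances between rows of x and rows of y."""
    x2 = np.sum(x * x, axis=-1)[:, None]
    y2 = np.sum(y * y, axis=-1)[None, :]
    diff2 = np.sum((x[:, None, :] - y[None, :, :]) ** 2, axis=-1)
    denom = np.maximum((1.0 - c * x2) * (1.0 - c * y2), eps)
    arg = 1.0 + 2.0 * c * diff2 / denom
    # Clamp to the domain of arccosh to guard against rounding below 1.
    return np.arccosh(np.maximum(arg, 1.0)) / np.sqrt(c)

def two_branch_embed(feats, w_high, w_low, c=1.0):
    """Project frozen backbone features into a wide and a narrow hyperbolic branch."""
    z_high = expmap0(feats @ w_high, c)  # assumed fine-grained branch
    z_low = expmap0(feats @ w_low, c)    # assumed bottleneck branch
    return z_high, z_low

def consistency_loss(z_high, z_low, c=1.0):
    """Assumed consistency term: match pairwise distance structure across branches."""
    d_high = poincare_dist(z_high, z_high, c)
    d_low = poincare_dist(z_low, z_low, c)
    return float(np.mean((d_high - d_low) ** 2))

# Random stand-ins for frozen ViT features (batch of 8, width 768) and
# for the projection weights, which would be learned in practice.
rng = np.random.default_rng(0)
feats = rng.normal(size=(8, 768))
w_high = rng.normal(scale=0.01, size=(768, 128))
w_low = rng.normal(scale=0.01, size=(768, 2))

z_high, z_low = two_branch_embed(feats, w_high, w_low)
loss = consistency_loss(z_high, z_low)
```

In a training setup this term would typically be added to a per-branch classification loss and minimized with an autodiff framework. The sketch only shows how embeddings of different dimensionality can be compared through their distance structure, which is one plausible way to couple a bottleneck branch to a wider one.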

About TMLR

Transactions on Machine Learning Research (TMLR) is a new venue for the dissemination of machine learning research that is intended to complement the Journal of Machine Learning Research (JMLR) while supporting the needs of a growing ML community.